Wait and see.

That’s the summary of my thoughts for Apple’s Worldwide Developers Conference this year. That event happens next week. The waiting and seeing will happen over the summer, possibly all the way until this time next year.

I’m not talking about what Apple will talk about at the conference and in the keynote. I’m talking about what they actually deliver down the road, and when they deliver it, for the numerous operating systems with 27 tagged on at the end. Some will get love. Some won’t. That’s nothing new. (Ask iPad users.) For a company with so many resources, each year’s releases always seem to curiously remind me that Apple picks a platform to focus on and seems content to let others wither on the vine for a time.

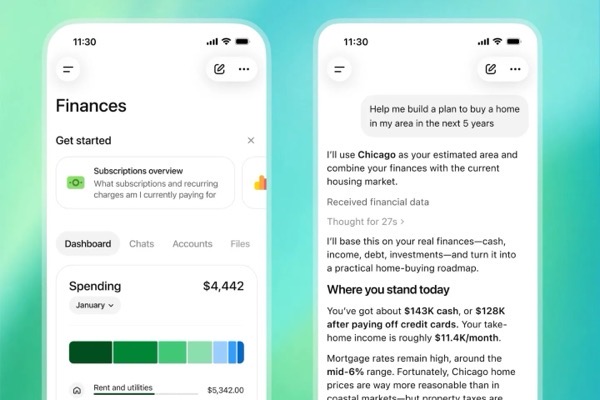

To be fair, I’ve always had a wait and see attitude towards most tech announcements, because what looks sexy and is hyped in the demos, press releases, and podcasts sometimes makes it into the hands of users, sometimes not. Prior to 2024, Apple usually delivered on what it promised. But that changed with Apple Intelligence. A name I bet they wish they’d never let see the light of day. They didn’t deliver then and haven’t since. They promise they will this time, although who knows what that will really mean. The weight of wait and see has become heavier.

This also comes at a time when there’s a burgeoning backlash building among consumers against anything related to Artificial Intelligence. So the climate is not nearly as inviting as it was two years ago. For what it’s worth, I think it’s becoming harder and harder to sell any product or feature that guarantees its inaccuracy up front while promising to do better in the future. But there sure seem to be enough takers and talkers thinking different on that than I.

Curiously, Apple seems to be hedging its bets, apparently set on using Google’s AI as the foundation. Some say that’s a placeholder until Apple rolls its own AI after its mistaken first rollout, the way it did with Maps. Some say it’s a wise move for the future because it might save Cupertino some cash and more blowback from having to build out its own data centers. I say, wait and see.

From what I read, Google may be reinventing itself and the Internet with its efforts, but those efforts aren’t meeting with thunderous applause and accolades. At least compared to Google’s competitors. Besides effectively putting Google in the catbird (chatbot?) seat driving all AI chatbot activity on the majority of handsets sold on the planet (Android and iOS), who knows how it will turn out. No chatbot can predict, nor can any human. That said, the marketing puzzle about what’s Apple and what’s Google is going to be fun to watch play out, even if in the end it’s going to be meaningless to most consumers.

There’s also word that this year’s OS releases will focus on fixes and not new features, the way Snow Leopard did for Leopard on Macs back in the day. Intriguingly, there were quite a few new features for Macs in that release. Speaking of Macs, there’s also talk that they will see more of Liquid Glass than we saw the first time around. To be honest, I’m grateful for last year’s comparative neglect of Liquid Glass on the Mac. I’m waiting and seeing, with a bit of trepidation, how attention will be focused on that this year. I’m also waiting on the day when someone finds a way to sell me on rounded corners on rectangular displays.

I would welcome fixes. Boy, would I welcome fixes. I’ve long maintained that the cadence of Apple’s OS release cycles is too rapid to allow it to effectively address problems. I get that there’s a long view and a necessity to look ahead, but when you’re hearing leaks about next year’s efforts before this year’s are announced, I think the tempo is too fast, and it becomes too tempting to push things off until the next year.

I’ve written about a number of things that bug me off and on. Because they bug me off and on. I’ll list some that stand out that I wish would get attention. That said, most seem to fall back on issues with iCloud. Hearing talk that however Apple rolls out the new Siri or chatbot feature, that feature’s chat history will sync across devices via iCloud gives me a shudder. I’m guessing that will lead to more unexplainable stops and stutters in iCloud syncing in general.

So, here’s a small list of things I hope, but am not counting on, seeing addressed.

iCloud syncing. Just make it reliable and give us Sync Now buttons. We get one in Messages. How about the rest of the core apps?

Perpetual Betas. I know and respect that Apple is continuing to work on each new operating system throughout the year. Kudos. It can’t be easy. That said, find a way to keep from mucking things up on the backend for users who don’t participate in betas. Perpetual beta weirdness is hell for normal users.

Phone app. Apple made significant changes last year. They need to make more. There’s no reason in the world I can see for not going all in to help users more efficiently get rid of unwanted or fraudulent calls.

Error Messages. Tell us more. Yes, I know something failed. Tell me more about what failed and point to a solution or information that can help me find out more.

Apple Mail. Rules in Apple Mail need to work consistently, or just be done away with. Features in Apple Mail on iPhones and Macs need to be brought into line with each other. The mismatch makes a mockery of trying to unify things between iOS and macOS.

Shortcuts. Yesterday’s future is probably tomorrow’s fading feature. Shortcuts are great when Apple doesn’t change things behind the scenes that cause them to break. That happens too often. Rumors that you’ll be able to create them via a chatbot sound potentially promising. But if they are still going to randomly break, what really is the point?

Contacts. A small amount of attention could do wonders with this seemingly forgotten, yet essential app.

Apple Music on the Mac. Why is this app so bad for a company that says over and over again that it loves music?

Reminder Notifications for Shared Reminders. There has to be a way to programmatically dismiss a shared reminder notification once it has been completed and marked off. Fix it. It is just simply annoying. Especially in the context of all of the improvements in the Reminders app the last few years.

App Store. For a company that spends untold amounts of money on its brick and mortar stores, I remain shocked that it can be proud of the software versions of any of its App Stores.

watchOS Software UI. We’ve already heard there won’t be much in the way of changes for the Apple Watch this year. But at least pay attention to some of the software design.

Settings. Find a way to clean up this mess. There has to be a way.

Note that many of the issues listed above are still hanging around and are the same as in my list last year.

As much as the attention will be on whatever Apple attempts with Apple Intelligence after WWDC 26, attention will also quickly pivot to the fall when new devices are announced. Given that we’ve heard countless times that devices like Apple TV and HomePods, and other home related products, are waiting in the wings for software focused on AI features to catch up, it will be curious to see what attention, if any, they get during WWDC. I don’t think those devices will be announced until the fall. I don’t expect any hardware announcements of any kind next week.

Speaking of waiting in the wings, much will also be made about this being Tim Cook’s final WWDC as CEO, with John Ternus due to take the spotlight this September. Much attention will be paid to the semiotics surrounding all of that during WWDC and after. That will be interesting to watch, but since WWDC 2026 feels more and more like a catch-up year all around, I’m guessing next year’s event might be more telling. We’ll have to wait and see.

So, there are my thoughts. That and a nickel won’t buy you anything.

You can also find more of my writings on a variety of topics on Medium at this link, including in the publications Ellemeno and Rome. I can also be found on social media under my name as above. This site does not use affiliate links.