There’s an issue out there that could change the way people think about a nuisance we all increasingly live with. That issue is spam. Emails, texts, phone calls, you name it. We’re swarmed by it like mosquitoes at dusk. And every effort you hear about from a tech company to make unwanted calls and messages less of a problem is essentially a sop, soon to be defeated. The bad guys are better at this game, and quite frankly, the good guys don’t really care.

I’ve often said that any politician running for national office promising to end spam in all forms as we know it would instantly find a constituency. I still believe that.

Politicians won’t do it, because, hey, they are part of the spamming problem. Note that they’ve exempted themselves from any soft-shelled regulations they’ve legislated in the past.

These days, tech CEOs also have an opening they’ll never take advantage of. Not that they don’t care the way politicians don’t, but spam is good for their business. Take the AI push and the reactions to it. The folks pushing Artificial Intelligence are worried about a backlash from consumers, corporations, and maybe a government or two spoiling their game. And that backlash appears to be growing.

Who knew that if the sales pitch was that AI would take your job, some would be unhappy?

Who knew that if your CEO discovered they weren’t racking up bottom-line savings by dismissing the workforce, they’d be a bit peeved?

Who knew, at law firms going through AI-induced downsizing, that feeding legal advice or sensitive information into an AI chatbot removed attorney-client privilege?

Who knew that folks watching, in plain sight, as local politicians took cash to push through new data center construction that would increase their utility bills would shockingly rise up in anger?

Who knew that employees of AI companies would be so concerned about how governments might use AI for surveillance and war fighting that they would petition their CEOs to stop government contracts?

Who knew that governments, which at one point were fat and happy to let AI run its race given all the cash lobbyists were stuffing in their pockets, would discover that these robots could indeed bring chaos to things like financial systems and just about anything else?

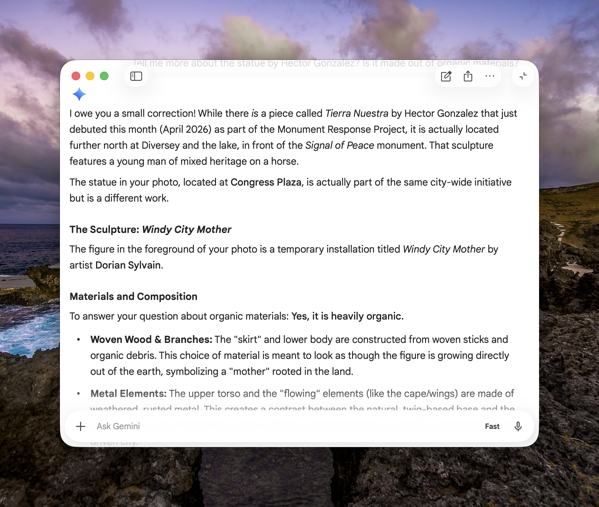

Who knew that in order to keep AI chatbots from hallucinating, the user has to tell the AI chatbot not to hallucinate? It’s like telling your kid or a politician not to lie and expecting that to happen.

Here’s a small hint. Everybody knew. Everybody knows. It sounds like for the most part the chumps are catching on.

While there are spheres where AI might actually be of benefit to society, AI might not get that chance unencumbered. So far, on a consumer level, its time-saving and life-altering benefits seem to have boiled down to sorting through emails and calendars, creating nonconsensual porn, making music and podcasts that nobody wants, dishing out bad therapy advice, and creating conversational partners for those who can’t converse with others in real life.

Essentially the same promises that computer technology has always made. Only this time around the wheel it’s becoming exponentially easier to collect data from anyone using the computers. And that’s the end game.

Even with this growing backlash, tech CEOs aren’t going to make a promise to use this new super intelligence that can schedule a flower delivery, or spit out your calendar, to derail the possibility of them controlling that game. It is funny though that no one seems to have created a chatbot or LLM that can solve PR problems.

I don’t pretend to understand all of the technological ins and outs of chatbots, LLMs, MCPs, and other terms that seem to change each time a new version comes out or something goes wrong. I do suspect that the technology they are promising could fix the spam problem if that was the desire. In the same way, politicians could do so with regulation.

There’s a part of me that thinks these are actually political promises with technological problems that could be solved, or at least ameliorated. But promises not made are easier to deal with than promises that have to be kept.

There’s money to be made, and plenty of suckers willing to pony up. So why upset the game by pandering to sentiment?

(Image from Hannes Johnson on Unsplash)

You can also find more of my writings on a variety of topics on Medium at this link, including in the publications Ellemeno and Rome. I can also be found on social media under my name as above. This site does not use affiliate links.
